(Yes indeed, I was trying to match the NonZero example where the author doesn't want to think about what could happen with a non-prime number, but still have no UB. Let me edit to match the actual u32::add().)

In some of my programs, I write the equivalent of safety comments whenever I do arithmetic, to explain why I think the calculation will return the right answer rather than overflowing – from my point of view it's one of the least safe things you can do in safe Rust (other than interacting with the operating system, which can go wrong in all sorts of ways).

This is primarily because I care about correctness in addition to soundness, and arithmetic is a major "correctness hazard". The consequences of an incorrect program are normally lower than the consequences of an unsound one, but they're still high enough that I put effort into avoiding them.

It's possible that the reason I can get away with this is that my programs usually aren't particularly arithmetic-heavy, and on the occasions where they are, they normally use some specialised custom numerical type whose wrapping behaviour is part of the program's functionality – I wouldn't be surprised if typical Rust code used arithmetic more heavily, in which case writing out the lack-of-overflow proofs would be too much of a burden.
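As a hypothetical illustration of such an arithmetic "safety comment" (the functions and their input ranges are made up for this sketch): the first function justifies in a comment why the addition cannot overflow, and the second, where no such argument is available, widens to `u16` instead.

```rust
/// Average of two percentages, rounded down.
/// Hypothetical precondition: both inputs are in 0..=100.
pub fn average_percent(a: u8, b: u8) -> u8 {
    assert!(a <= 100 && b <= 100, "percentages must be in 0..=100");
    // No overflow: a <= 100 and b <= 100, so a + b <= 200 <= u8::MAX (255).
    (a + b) / 2
}

/// Average of two arbitrary u8 values, rounded down.
pub fn average_u8(a: u8, b: u8) -> u8 {
    // a + b may exceed 255, so widen to u16 first. The result fits back into
    // u8 because the average of two u8 values is at most 255.
    ((u16::from(a) + u16::from(b)) / 2) as u8
}
```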

The big difference from my Prime example is that there is no invariant being violated across calls for add, but there is for Prime.

With Prime::new_unchecked, if I make it safe, the problem is that adding this one function affects all other functions and all users, even those that never call Prime::new_unchecked.

Every other function (in the library and outside of the library) has to now document what might happen when a Prime isn't prime, and every user has to consider that possibility in their code dealing with Primes.

I'd much rather make the 1% of users use an unsafe block than force everybody else to deal with the lack of a basic invariant.
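To make the trade-off concrete, here is a minimal sketch of what such a `Prime` type might look like. The names (`Prime`, `is_prime`, `get`) and the trial-division check are assumptions for illustration, not the original example's code. Because `new_unchecked` is `unsafe`, every other function, in this crate or downstream, may treat "a `Prime` is prime" as a guarantee instead of having to document what happens otherwise.

```rust
/// A `u32` that is guaranteed to be prime (hypothetical type).
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct Prime(u32);

/// Trial-division primality test (hypothetical helper, O(sqrt n)).
pub fn is_prime(n: u32) -> bool {
    if n < 2 {
        return false;
    }
    let mut d = 2u32;
    // `d <= n / d` is `d * d <= n` without the risk of overflowing `d * d`.
    while d <= n / d {
        if n % d == 0 {
            return false;
        }
        d += 1;
    }
    true
}

impl Prime {
    /// Checked constructor: returns `None` if `n` is not prime.
    pub fn new(n: u32) -> Option<Prime> {
        if is_prime(n) { Some(Prime(n)) } else { None }
    }

    /// # Safety
    ///
    /// `n` must be prime. Other code, including other crates, may rely on this.
    pub unsafe fn new_unchecked(n: u32) -> Prime {
        debug_assert!(is_prime(n));
        Prime(n)
    }

    pub fn get(self) -> u32 {
        self.0
    }
}
```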

Consider how String only allows valid UTF-8. The primary benefit of that is not that some rare function has slightly better performance because it can have UB for invalid UTF-8. I'd argue it's a good idea even if all std functions could deal with invalid strings without UB. The main benefit is that users don't have to worry about how to deal with invalid strings being passed around.
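A small sketch of that benefit (the function names are hypothetical): invalid UTF-8 is rejected once, at the boundary, by `String::from_utf8`, and code that later receives a `&str` has no error path for it at all.

```rust
use std::string::FromUtf8Error;

/// Hypothetical boundary function: the only place invalid UTF-8 must be handled.
pub fn decode(bytes: Vec<u8>) -> Result<String, FromUtf8Error> {
    String::from_utf8(bytes)
}

/// Hypothetical consumer: `&str` is always valid UTF-8, so there is no error case.
pub fn first_char(s: &str) -> Option<char> {
    s.chars().next()
}
```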

Let's take the other route. If you want both unsafe and performance, then don't have new_unchecked. Only have a new that performs the checks and returns Option. Users can call unwrap_unchecked to remove the check entirely. That is both unsafe and performant, and all invariants inside your prime crate are upheld. The other option is to panic in new; then, to gain performance, users can call the code like unsafe { std::hint::assert_unchecked(is_prime(val)) } or something along those lines, which will also remove the panic's check.
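A sketch of both routes, assuming a hypothetical `Prime` type whose only constructors are checked (`new` returns `Option`, `new_or_panic` panics). `Option::unwrap_unchecked` and `std::hint::assert_unchecked` are real standard library functions (the latter stable since Rust 1.81); whether the optimizer actually removes the primality check is not guaranteed.

```rust
use std::hint::assert_unchecked;

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct Prime(u32);

/// Hypothetical trial-division primality test.
fn is_prime(n: u32) -> bool {
    n >= 2 && (2u32..).take_while(|&d| d <= n / d).all(|d| n % d != 0)
}

impl Prime {
    /// Route 1: the only constructor is checked and returns `Option`.
    pub fn new(n: u32) -> Option<Prime> {
        is_prime(n).then_some(Prime(n))
    }

    /// Route 2: a panicking constructor (hypothetical name).
    pub fn new_or_panic(n: u32) -> Prime {
        assert!(is_prime(n), "{n} is not prime");
        Prime(n)
    }
}

/// Caller side of route 1.
///
/// # Safety
///
/// `n` must be prime.
pub unsafe fn prime_from_option(n: u32) -> Prime {
    // SAFETY: the caller guarantees `n` is prime, so `new` returns `Some`.
    unsafe { Prime::new(n).unwrap_unchecked() }
}

/// Caller side of route 2.
///
/// # Safety
///
/// `n` must be prime.
pub unsafe fn prime_from_panicking(n: u32) -> Prime {
    // SAFETY: the caller guarantees `n` is prime, so the condition holds.
    unsafe { assert_unchecked(is_prime(n)) };
    Prime::new_or_panic(n)
}
```

Either way the unsafety lives at the call site, and the crate itself never has to consider a non-prime `Prime`.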

So the answer to "is new_unchecked safe or unsafe?" is: this method is a bad design choice and should not be there.

I have to disagree with the idea that unsafe should only ever be used for memory safety. While that is true within the standard library, it certainly is not widely applicable to the ecosystem at large.

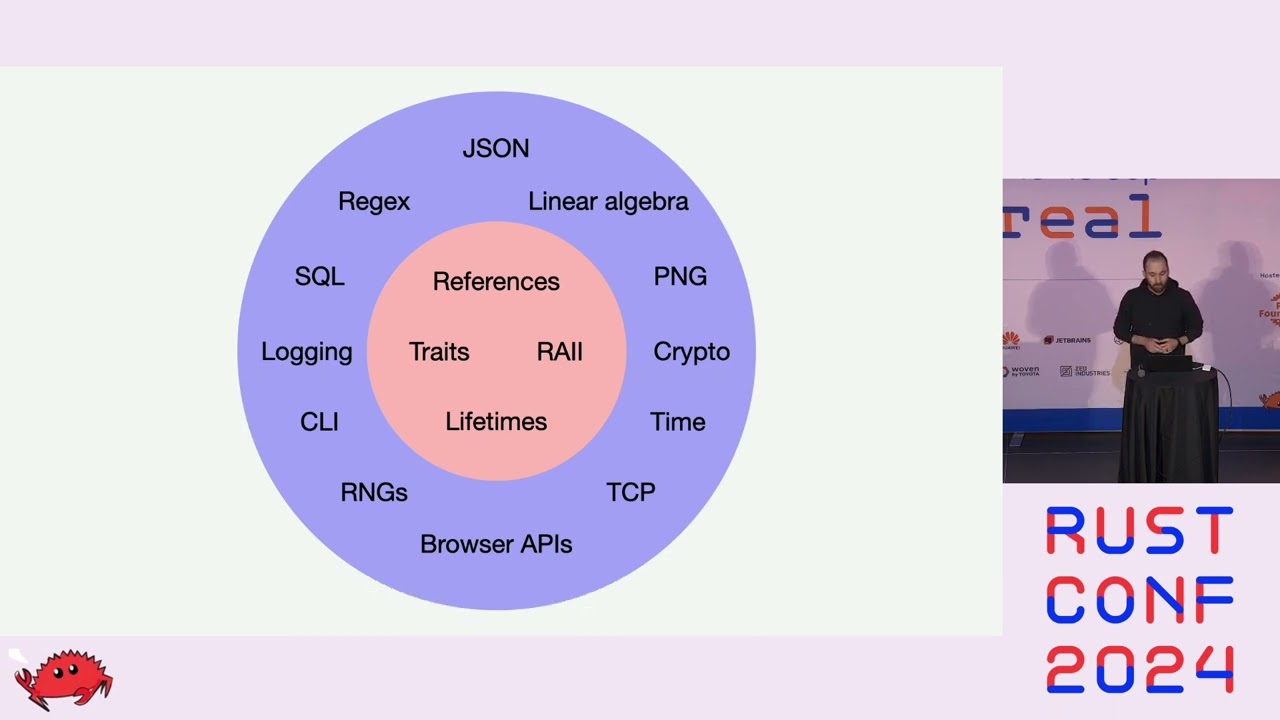

Using unsafe outside memory safety is extremely useful to encode correctness into the type system, as described in this RustConf 2024 talk:

Interesting. I'd rather not do that, because I'd have to trust the optimizer to remove an entire primality check, reducing the asymptotic complexity of the whole computation from huge to constant time. That seems brittle performance-wise, since optimizations are never guaranteed.

The standard library could do this in many places too, but doesn't:

- `slice.get_unchecked(i)` could be `slice.get(i).unwrap_unchecked()`

- `NonZero::new_unchecked(x)` could be `NonZero::new(x).unwrap_unchecked()`

- `str::from_utf8_unchecked(v)` could be `str::from_utf8(v).unwrap_unchecked()`

The last one would also rely heavily on the optimizer.
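For the `NonZero` case, the two spellings can be compared directly. A minimal sketch using the standard library's generic `NonZero` (stable since Rust 1.79); the function names are hypothetical. Both functions are `unsafe` and push the same precondition onto the caller; they differ only in whether the check is skipped by construction or left for the optimizer to remove.

```rust
use std::num::NonZero;

/// # Safety
///
/// `x` must be non-zero.
pub unsafe fn direct(x: u32) -> NonZero<u32> {
    // SAFETY: forwarded to the caller.
    unsafe { NonZero::new_unchecked(x) }
}

/// # Safety
///
/// `x` must be non-zero.
pub unsafe fn via_checked(x: u32) -> NonZero<u32> {
    // SAFETY: `x != 0`, so `NonZero::new` returns `Some`.
    unsafe { NonZero::new(x).unwrap_unchecked() }
}
```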

Here's an example where safe code can break a correctness invariant:

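```rust
use std::cell::Cell;
use std::collections::BTreeSet;
use std::hint::assert_unchecked;

fn main() {
    let mut map = BTreeSet::new();
    for i in 10..15 {
        map.insert(Cell::new(i));
    }
    // Rebind immutably: the set itself can no longer be mutated...
    let map = map;
    // ...but `Cell` still lets safe code change an element's key in place.
    map.get(&Cell::new(12)).unwrap().set(99);

    let vec: Vec<i32> = map.into_iter().map(Cell::into_inner).collect();
    println!("{vec:?}"); // incorrect: [10, 11, 99, 13, 14]

    // Treating the set's ordering invariant as a soundness guarantee is wrong:
    unsafe { assert_unchecked(vec.is_sorted()) }; // UB
}
```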

The standard library doesn't use an Unspecified section like I do, but it uses similar terminology:

> The behavior resulting from such a logic error is not specified, but will be encapsulated to the BTreeSet that observed the logic error and not result in undefined behavior. This could include panics, incorrect results, aborts, memory leaks, and non-termination.

Given who gave the talk, I strongly suspect that if unsafe is used for correctness there, it's because unsafe code relies on that correctness, not just arbitrary correctness.

I do not find the argument that "unsafe is only for things that can cause AM-level UB" particularly convincing.

A quick search across crates shows that pretty much everybody agrees with you and me that potentially-invariant-violating functions are unsafe, even when they don't lead to any language-level UB. Generally, nobody seems to explicitly clarify anything about soundness vs. correctness in their crate.

ordered_float::NotNan::new_unchecked is unsafe, even though putting NaN in there wouldn't cause any language-level UB in the crate (I think).

vecmap::VecMap::from_vec_unchecked is similarly unsafe, even though putting a vector with duplicates in there would just lead to inconsistent lookups rather than language-level UB.

I see no reason why it wouldn't be applicable. Of course, library authors can choose to violate this principle, but that blurs the line of what unsafe code is about, so if it happens at scale, I think it's a worse outcome for the ecosystem than the alternative.

I watched that talk and don't remember it suggesting the use of unsafe for non-memory-safety concerns. Could you provide a more specific citation that does not require watching the entire talk (e.g. a timestamp)?

This is an invitation, whether intended or not, for other crates to write functions that take NotNan arguments and use their non-NaN-ness in a soundness-critical way. That's an entirely reasonable thing to do if there is a good use case for UB-relevant non-NaN-ness, for the same reason that NonZero exists.

Here's a similar function in a rustc-internal type that is safe: SortedMap::insert_presorted. Those two APIs communicate different things about the potential consequences of giving them invalid inputs. VecMap allows UB to happen -- if not today, then maybe in a future version of that crate.
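To illustrate the distinction with a hypothetical sorted-vector map (not the actual `vecmap` or rustc `SortedMap` code): the "trust me" constructor below is safe, and its documentation promises only unspecified lookups on bad input. That is sound because `binary_search_by` on an unsorted slice returns an unspecified result but never causes UB. Marking it `unsafe` instead would reserve the right to rely on sortedness for soundness later.

```rust
/// Hypothetical map backed by a Vec kept sorted by key, without duplicate keys.
pub struct SortedVecMap<K, V> {
    entries: Vec<(K, V)>,
}

impl<K: Ord, V> SortedVecMap<K, V> {
    /// Checked constructor: sorts, then keeps the first entry of each equal key.
    pub fn from_vec(mut entries: Vec<(K, V)>) -> Self {
        entries.sort_by(|a, b| a.0.cmp(&b.0)); // stable sort keeps input order of ties
        entries.dedup_by(|later, earlier| later.0 == earlier.0);
        SortedVecMap { entries }
    }

    /// Safe "trust me" constructor in the spirit of `insert_presorted`:
    /// if `entries` is not sorted and deduplicated, lookups return
    /// unspecified results, but never cause undefined behavior.
    pub fn from_presorted_vec(entries: Vec<(K, V)>) -> Self {
        SortedVecMap { entries }
    }

    pub fn get(&self, key: &K) -> Option<&V> {
        self.entries
            .binary_search_by(|(k, _)| k.cmp(key))
            .ok()
            .map(|i| &self.entries[i].1)
    }
}
```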

At 18:00 there is an example of using an unsafe trait to define an order between locks for deadlock prevention at compile time. An incorrect implementation (i.e. a cycle between locks) may lead to deadlock (not a language-level UB).
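A rough sketch of that idea, assuming hypothetical names (`LockAfter`, `OrderedMutex`, `OrderedGuard`, `LevelA`, `LevelB`) rather than the API shown in the talk: implementing the `unsafe` trait is the claim that the declared ordering has no cycles, and the compiler rejects lock acquisitions that go against it. Like the talk's version, this is not air-tight; for example, nothing stops calling plain `lock` while another guard is held.

```rust
use std::marker::PhantomData;
use std::ops::{Deref, DerefMut};
use std::sync::{Mutex, MutexGuard};

/// # Safety
///
/// `impl LockAfter<A> for B` claims that `B` may be locked while `A` is held.
/// The implementations must not form a cycle, or deadlock becomes possible.
/// This obligation concerns deadlock freedom, not memory safety.
pub unsafe trait LockAfter<Held> {}

pub struct LevelA;
pub struct LevelB;

// SAFETY: the only ordering edge is LevelA -> LevelB, so there is no cycle.
unsafe impl LockAfter<LevelA> for LevelB {}

pub struct OrderedMutex<Lvl, T> {
    inner: Mutex<T>,
    _level: PhantomData<Lvl>,
}

pub struct OrderedGuard<'a, Lvl, T> {
    guard: MutexGuard<'a, T>,
    _level: PhantomData<Lvl>,
}

impl<Lvl, T> OrderedMutex<Lvl, T> {
    pub fn new(value: T) -> Self {
        OrderedMutex { inner: Mutex::new(value), _level: PhantomData }
    }

    /// Lock with no other ordered lock held (not enforced in this sketch).
    pub fn lock(&self) -> OrderedGuard<'_, Lvl, T> {
        OrderedGuard { guard: self.inner.lock().unwrap(), _level: PhantomData }
    }

    /// Lock while holding a guard of level `Held`; compiles only if `Lvl: LockAfter<Held>`.
    pub fn lock_after<Held, U>(&self, _held: &OrderedGuard<'_, Held, U>) -> OrderedGuard<'_, Lvl, T>
    where
        Lvl: LockAfter<Held>,
    {
        self.lock()
    }
}

impl<Lvl, T> Deref for OrderedGuard<'_, Lvl, T> {
    type Target = T;
    fn deref(&self) -> &T {
        &self.guard
    }
}

impl<Lvl, T> DerefMut for OrderedGuard<'_, Lvl, T> {
    fn deref_mut(&mut self) -> &mut T {
        &mut self.guard
    }
}

/// Locks `a`, then `b`, in the declared order.
pub fn transfer(a: &OrderedMutex<LevelA, i32>, b: &OrderedMutex<LevelB, i32>) {
    let mut ga = a.lock();
    let mut gb = b.lock_after(&ga);
    *ga -= 1;
    *gb += 1;
}
```

With this, `transfer` compiles, while locking `b` first and then calling `a.lock_after(&gb)` fails to compile, because `LevelA: LockAfter<LevelB>` is not implemented.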

Not necessarily. This is a semver question. It depends if you want the option of providing robustness within the same major version, or if you're fine bumping the major version.

This suggests the main "catch" in this "whether to use unsafe" debate.

For all situations like NonNull and Prime where a newtype is intended to provide only a subset of values that are representable in the underlying type, an author can always justify using unsafe as reserving the right to rely on this for soundness in some future release.

As a trivial example: if some future release of Rust introduced a stable way to describe niches for enum size optimization, I expect I would use it on my newtypes just in case some user of the type finds the size optimization useful, because it's unlikely to impose any significant constraints on me as a maintainer beyond those I was already accepting for "correctness" or "robustness" in the absence of that optimization.

Since the future is unwritten, any library author can justify treating these constraints as soundness requirements even if they aren't today, by appeal to their potentially becoming soundness requirements in a future minor release. And once they've done that, there's little disadvantage to documenting that unsafe code elsewhere is allowed to rely on it as a soundness guarantee even in the current version, if you're intending to maintain that invariant in all future minor releases anyway.
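For what it's worth, the standard library already shows what relying on such an invariant for layout looks like. On stable Rust a crate can't declare its own niche, but a newtype can inherit one by storing a `NonZero`; the `Option` documentation guarantees the size optimization for a `#[repr(transparent)]` wrapper around `NonZero`. A sketch with hypothetical `Id`/`PlainId` types:

```rust
use std::mem::size_of;
use std::num::NonZero;

/// Hypothetical ID whose "never zero" invariant is encoded via `NonZero`,
/// so the compiler may use 0 as a niche for `Option<Id>`.
#[repr(transparent)]
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct Id(NonZero<u32>);

/// Same invariant, enforced only by the library's own checks: no niche.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct PlainId(u32);

impl Id {
    pub fn new(raw: u32) -> Option<Id> {
        NonZero::new(raw).map(Id)
    }
}

/// True if `Option<Id>` is no larger than a bare `u32`.
pub fn id_has_niche() -> bool {
    size_of::<Option<Id>>() == size_of::<u32>()
}
```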

Ah, fair, good point. Personally I don't quite see why it has to be unsafe to achieve its goal (he even talks later about how the deadlock prevention isn't fully air-tight and just makes it sufficiently hard to accidentally cause a deadlock), but they are indeed using unsafe for non-memory-safety concerns here.

Cc @joshlf

IMO there's a lack of good nomenclature here. I would love for Rust to eventually support user-defined "unsafes", ie unsafe (the one we know and love), unsafe(lock_ordering::Deadlock) (defined by the lock_ordering crate), unsafe(diesel::SqlInjection), etc.

Currently, code can either be safe or unsafe, but nothing in between. One of the reasons that the unsafe concept is so powerful is that it is viral – if you're using it correctly, it forces you to reason about how far it "infects" your codebase. As others have mentioned, it's a powerful tool for code review because anything that's safe is guaranteed to not cause memory safety issues, while anything that's unsafe needs human scrutiny. Once you've (hopefully correctly) scrutinized all of the unsafe, you can be confident that all the safe code you didn't scrutinize is fine.

If you want to get those same benefits for other (non-memory) safety properties, your current option is just to use unsafe, which is obviously not ideal. That's why I used it in the talk – I wanted to convey the idea that we're getting a strong guarantee, and that users who care about deadlock freedom can adopt the same "I only need to scrutinize the unsafe code to be confident my codebase is deadlock-free" approach. That requires using the unsafe keyword. Eventually I hope we can introduce a finer-grained notion of safety so that we can distinguish between types of safety and users can only focus on the types that they care about.

TLDR: I was abusing the unsafe keyword and I did it knowingly

Having skimmed some but not all of this thread (so apologies if this got covered in the parts I didn't read), I think some framing might be helpful.

@ia0's concept of "robustness" (a thing we're all talking about, but I give @ia0 credit for being the first to try to name it explicitly) is simply beyond the scope of what the community has norms around. With regards to robustness, we're in a similar situation that C was in decades ago before they started to formalize the concept of UB. It's largely vibes right now.

I suspect we'll see a similar trajectory with robustness in Rust to the trajectory of UB in C. That is, it will be a very long and very slow process to move towards a world in which robustness is a first-class concept that:

- Everyone knows about

- The ecosystem has norms about

- The tooling supports as a first-class concept

I just think we're really far from that reality right now. I am the co-maintainer of arguably one of the most paranoid crates in existence, and even we throw up our hands with respect to robustness. Here's a small list of examples of things that could undermine our soundness/cause UB:

- We assume that `syn` correctly parses Rust code

- We assume that attribute macros don't do insane things

- We assume that named types don't do insane things

I think a core reason for this disagreement (re: the role of unsafe fields) is that we're on a fundamentally shaky foundation. It's like we're measuring different parts of a room and arguing about whose measurements are wrong when in fact the room's walls aren't perfectly parallel, and so we're all right (or we're all wrong?).

So what do we do about it? The position of the unsafe fields RFC is to make incremental progress without trying to boil the ocean and adjudicate the question of robustness. It moves us towards being able to address robustness by making it easier to reason in a clearer-headed way about module-local safety, but it doesn't actually address the robustness question head-on.

I think we should expect many years of the robustness question being a vaguely-defined thing that frequently confuses unsafe code authors, causes us to talk past each other, etc. Hopefully we'll eventually be able to speak about robustness with language as precise as the language we have for module-local safety today, but we're currently a long way off. But I'm fine with that! It's an exciting way to get to spend a career.

I feel like ultimately, a common use-case for private unsafe fields will be cases where one could have instead also added an additional module/privacy layer instead but that would be too inconvenient.

For example with Vec, you could have an inner module that has basic methods such as unsafe fn from_raw_parts_in and unsafe fn set_len (and probably a few more) that document the necessary safety conditions / invariants; and then additional functionality (i.e. some more of the inherent methods of Vec) can be implemented on top of these outside of this inner module, where the fields of Vec are no longer visible.

This way, everywhere where the invariants must be upheld, an unsafe block must ultimately be used, and it should be easier not to forget about any of the conditions/invariants. (For example, it’s already the case that most (or all?) writes to len do go through set_len; presumably for exactly this reason.)
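A minimal sketch of that inner-module layering, using a hypothetical fixed-capacity `Buf` of `u32`s as a stand-in for `Vec` (the capacity, names, and methods are assumptions): only the `raw` module sees the fields, so the outer `push`/`pop` can change the length only through `unsafe fn set_len`, and each call carries its own safety comment.

```rust
mod raw {
    use std::mem::MaybeUninit;
    use std::slice;

    const CAP: usize = 8;

    /// Fixed-capacity buffer of `u32` (hypothetical, simplified stand-in for `Vec`).
    /// Invariant: `len <= CAP` and `data[..len]` is initialized.
    pub struct Buf {
        data: [MaybeUninit<u32>; CAP],
        len: usize,
    }

    impl Buf {
        pub fn new() -> Self {
            Buf { data: [MaybeUninit::uninit(); CAP], len: 0 }
        }

        pub fn len(&self) -> usize {
            self.len
        }

        pub fn capacity(&self) -> usize {
            CAP
        }

        pub fn as_slice(&self) -> &[u32] {
            // SAFETY: by the invariant, `data[..len]` is initialized, and
            // `MaybeUninit<u32>` has the same layout as `u32`.
            unsafe { slice::from_raw_parts(self.data.as_ptr().cast::<u32>(), self.len) }
        }

        /// The uninitialized tail `data[len..]`; writing to it cannot break the invariant.
        pub fn spare_mut(&mut self) -> &mut [MaybeUninit<u32>] {
            &mut self.data[self.len..]
        }

        /// # Safety
        ///
        /// `new_len <= self.capacity()` and `data[..new_len]` must be initialized.
        pub unsafe fn set_len(&mut self, new_len: usize) {
            self.len = new_len;
        }
    }
}

use raw::Buf;

// Outside `raw` the fields are invisible, so every change to `len` goes through `set_len`.
impl Buf {
    /// Appends `value`; returns `false` if the buffer is full.
    pub fn push(&mut self, value: u32) -> bool {
        let len = self.len();
        if len == self.capacity() {
            return false;
        }
        self.spare_mut()[0].write(value);
        // SAFETY: len < capacity, and slot `len` was just initialized.
        unsafe { self.set_len(len + 1) };
        true
    }

    pub fn pop(&mut self) -> Option<u32> {
        let len = self.len();
        let value = *self.as_slice().last()?;
        // SAFETY: shrinking by one keeps `data[..len - 1]` initialized and within capacity.
        unsafe { self.set_len(len - 1) };
        Some(value)
    }
}
```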

Putting unsafe on a field is basically equivalent to creating an inner module, re-exporting the type itself from that inner module into the current module, making all the unsafe fields not visible outside of this inner module, and instead providing unsafe accessor functions [for reading[1] and/or writing the field] which document the invariants (this equivalence is perfect only if we ignore the limitations around e.g. partial borrows that accessor functions can create). One could even design a macro crate for a similar effect, I suppose, and we wouldn’t need unsafe fields as a language feature at all (still, ignoring the case of partial borrows; which honestly should not be too common, anyway). [Also, interaction with other derive macros is an issue with a macro-based solution.[2]]

[1] Though, as I noted above, I’m not convinced that `unsafe` reading is really all that common, and in the rare cases where it does occur, you could always get a similar result, still, by just wrapping the field’s type into another `UnsafeRead<T>` wrapper type that only provides some `unsafe fn _(&UnsafeRead<T>) -> &T` method for reads, anyway [only if you also need e.g. partial borrows, this might not work so well]

[2] This made me notice that the current design of `unsafe` fields with unsafety including reads is also annoying for derive macros; e.g. you can’t even `#[derive(Debug)]` anymore!

Yep! We do exactly that in zerocopy to work around the lack of unsafe fields.


Hahah I was literally talking to @jack about this idea yesterday.

I am not sure I agree that your examples are relevant here. The first one, perhaps, but the latter two are just the plain old issue of token-based macro systems being bad. It's not a question of defining robustness; it's a question of macros lacking the expressiveness to define any sort of API contract at all.

Note that this process already started with the work on contracts:

- A function is `unsafe` if and only if it has a `#[requires]` clause.

- A function is `robust` if and only if it has an `#[ensures]` clause.

But I agree it's going to take some time until it's usable by third-party crates, and even more time until it's adopted ecosystem-wide.
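Until contracts are usable, the two directions can be approximated with documentation plus `debug_assert!`. A hypothetical sketch (the function names are made up, and this is not the contracts API): a precondition makes `get_unchecked_sketch` `unsafe`, while a documented postcondition makes `argmax` "robust", so unsafe code may rely on its output.

```rust
/// Precondition ("requires"): `i < v.len()`.
/// Callers must uphold it, so the function is `unsafe`.
pub unsafe fn get_unchecked_sketch(v: &[u32], i: usize) -> u32 {
    debug_assert!(i < v.len(), "requires: i < v.len()");
    // SAFETY: the caller guarantees `i < v.len()`.
    unsafe { *v.get_unchecked(i) }
}

/// Postcondition ("ensures"): any returned index is `< v.len()`.
/// Callers, including unsafe code, may rely on it, so the function is "robust".
pub fn argmax(v: &[u32]) -> Option<usize> {
    let result = (0..v.len()).max_by_key(|&i| v[i]);
    debug_assert!(result.map_or(true, |i| i < v.len()), "ensures: index in bounds");
    result
}

/// Unsafe code relying on the robust postcondition of `argmax`.
pub fn max_value(v: &[u32]) -> Option<u32> {
    let i = argmax(v)?;
    // SAFETY: `argmax` guarantees `i < v.len()`.
    Some(unsafe { get_unchecked_sketch(v, i) })
}
```

Note that a bug in `argmax` could then cause UB in `max_value` even though `argmax` itself is safe, which is why a robust function's postcondition needs the same care as an unsafe function's precondition.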

Actually the inner module solution is more expressive, as you point out, because you can choose which operation(s) is/are unsafe: reading and/or writing. Note that if robust was a keyword, you could have the same expressiveness with fields:

- A field is `unsafe` if and only if there are conditions to read it.

- A field is `robust` if and only if there are conditions to write it.

This sounds confusing because unsafe fields today have the semantics of both keywords, and they use the unsafe keyword while the motivation for them comes from the semantics of the robust keyword.

The semantics of those keywords, when applied to a variable of a given type with a given invariant, are:

- `unsafe` when there are values satisfying the invariant of the variable that do not satisfy the safety invariant of the type. Such values are usually called unsafe, for example a non-UTF-8 value of type `str`, or a function of type `fn(i32)` that has UB on negative integers.

- `robust` when there are values satisfying the safety invariant of the type that do not satisfy the invariant of the variable. Such values don't really have a name. Examples are `Vec::len` or the `index` parameter of `[T]::get_unchecked()`.

Again, the work on contracts is going to bypass the need for those keywords. A variable (like a field or struct) annotated with #[invariant] defines the invariant of the variable. One can then simply compare it with the safety invariant of the type of that variable. And by looking at the emptiness of each side of the symmetric difference, one can tell if the variable is unsafe and/or robust.

But until we get there (if we ever do), it's useful to have crutches like unsafe fields, safety documentation, safety comments, safety guidelines, unsafe reviews, safety tools (dynamic and static), etc.

Indeed; that’s why I’m thinking that unsafe fields make most sense as a convenience feature of sorts, and as such it should support the most common case. IMO it’s going to be much more common that reads (including: taking an immutable reference, making a copy of a Copy field, moving a value out of a field [only works on structs without Drop implementation]) are safe, and only writes (including: taking a mutable reference, overwriting a field with a new value, writing a value into a field that has previously been moved out of [only works on structs without Drop implementation], constructing the struct in the first place) are unsafe.

As I also note (in a footnote), unsafe fields could still help even for the “reads are unsafe” case, too. That is because reads can be prevented by wrapping the field in another helper type. If you wrap your fields in something like

```rust
struct Foo {
    /// # Safety
    /// [explain invariants here, and also
    /// the conditions for when reads are or aren’t safe]
    unsafe field: UnsafeToRead<Bar>,
}
```

with a repr(transparent) helper type UnsafeToRead that only allows access to its contents via some method like

```rust
impl<T> UnsafeToRead<T> {
    /// # Safety
    /// If `*self` is owned by an (`unsafe`) field in a struct, the safety conditions
    /// documented on that field must be upheld when calling `get`!
    unsafe fn get(&self) -> &T { /* … */ }
}
```

A wrapper type alone couldn’t help so well with preventing mutability, since a non-unsafe field can always be replaced by another wrapped value, or e.g. mem::swapped whole between different struct values, etc… but if the language mechanism supports making only writes unsafe with the unsafe field: Type syntax, then all you can do to a wrapped UnsafeToRead<_> field is take a reference to the whole (still wrapped) field, which essentially does nothing. [At least I don’t think there are any additional effects from creating such a reference that wouldn’t already be happening from having (or perhaps re-borrowing) a reference to the whole struct, anyway.]
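On stable Rust, the wrapper half of this idea can already be written. A sketch, assuming `get` simply returns a reference to the contents; the type lives in its own module so that its tuple field is private everywhere else. As noted above, this does nothing to make writes unsafe; that part still needs the language feature.

```rust
mod wrapper {
    /// Wrapper whose contents can only be read through an `unsafe` method.
    #[repr(transparent)]
    pub struct UnsafeToRead<T>(T);

    impl<T> UnsafeToRead<T> {
        pub fn new(value: T) -> Self {
            UnsafeToRead(value)
        }

        /// # Safety
        ///
        /// If `*self` is owned by a field in a struct, the safety conditions
        /// documented on that field must be upheld when calling `get`.
        pub unsafe fn get(&self) -> &T {
            &self.0
        }
    }
}

use wrapper::UnsafeToRead;
```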

@scottmcm do you perhaps know/remember[1] whether or not this kind of observation [that – unlike making writes unsafe in current Rust – making reads unsafe (on top of language-feature-supported unsafe writes) can be quite effectively&conveniently achieved through a wrapper type, with relatively few drawbacks?] had even been raised / noticed when the lang team decided/voted in favor of unsafe field being also unsafe to read?

[1] sparing me the need to search through 200+ replies on the RFC, and perhaps even looking for anything extra in terms of discussion from lang-team elsewhere